Batch supports throughput-oriented, HPC, AI/ML, and data processing jobs. It supports all CPU machine families, including the newly released T2A Arm instances, and, in collaboration with NVIDIA, it supports NVIDIA GPUs for demanding batch workloads such as ML training, HPC, and graphics simulation. It supports common job types, including arrays of jobs and multi-node MPI jobs that use task parallelization. You can bring your own scripts or containerized workloads and choose from flexible provisioning models, including custom machine types and Spot VMs, which offer up to 91% savings compared with regular compute instances.
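For array jobs, each task usually works on its own slice of the input. The minimal Java sketch below shows one way to compute that slice. It assumes each task can read its zero-based index and the total task count from environment variables named `BATCH_TASK_INDEX` and `BATCH_TASK_COUNT`; treat those names as assumptions and confirm them in the Batch documentation. The item total and the even-split policy are hypothetical.

```java
import java.util.Map;

// Sketch: split totalItems across taskCount array tasks so each task gets a
// contiguous, non-overlapping range. The env var names used in main() are
// assumptions to verify against the Batch documentation.
final class ArrayTaskShard {
    private ArrayTaskShard() {}

    /** Returns {start, endExclusive}; the first (totalItems % taskCount) tasks get one extra item. */
    static int[] range(int taskIndex, int taskCount, int totalItems) {
        if (taskCount <= 0 || taskIndex < 0 || taskIndex >= taskCount || totalItems < 0) {
            throw new IllegalArgumentException("invalid task index/count or item total");
        }
        int base = totalItems / taskCount;
        int extra = totalItems % taskCount;
        int start = taskIndex * base + Math.min(taskIndex, extra);
        int size = base + (taskIndex < extra ? 1 : 0);
        return new int[] {start, start + size};
    }

    /** Reads an integer from the environment map, falling back when the variable is absent. */
    static int envInt(Map<String, String> env, String name, int fallback) {
        String value = env.get(name);
        return value == null ? fallback : Integer.parseInt(value.trim());
    }

    public static void main(String[] args) {
        Map<String, String> env = System.getenv();
        int index = envInt(env, "BATCH_TASK_INDEX", 0); // assumed variable name
        int count = envInt(env, "BATCH_TASK_COUNT", 1); // assumed variable name
        int totalItems = 1000; // hypothetical input size
        int[] r = range(index, count, totalItems);
        System.out.println("task " + index + " of " + count + " handles items [" + r[0] + ", " + r[1] + ")");
    }
}
```

Giving the first `totalItems % taskCount` tasks one extra item keeps the ranges contiguous and non-overlapping, so every item is handled exactly once across the array.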

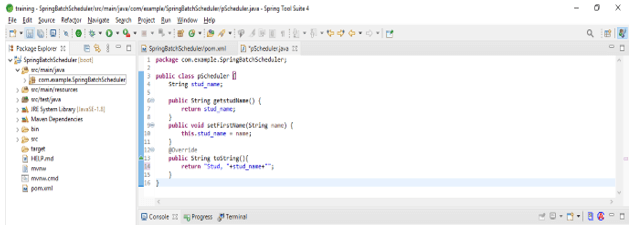

Today, we're excited to announce the Preview release of our fully managed service, Batch, which provides easy access to Google Cloud's computing power and scale.

Batch processing is as old as computing itself, with the term "batch" dating back to the punch cards used by early mainframes. Batch processing uses resources very efficiently and remains the preferred way of running jobs that don't need much human interaction. Enterprise workloads very often include some batch processing elements, and as enterprise customers turn to the cloud for more compute resources or easy access to the latest processors and GPUs, they bring their batch workloads with them. Batch jobs are especially prevalent in areas such as research, simulation, genomics, visual effects, fintech, manufacturing, and EDA.

Batch is a general-purpose batch job service and the latest in a long list of products we've created over the years that process jobs to help enterprises migrate their workloads to the cloud. These services include Cloud Life Sciences (formerly Google Genomics), Dataflow, and Cloud Run Jobs.

The Batch service handles several essential tasks automatically: it manages the job queue, provisions and autoscales resources, runs jobs, executes subtasks, and deals with common errors. You can access the service through the API, the gcloud command-line tool, workflow engines, or the easy-to-use UI in Cloud Console. In short, Batch lets developers, admins, scientists, researchers, and anyone else interested in batch computing focus on their applications and results while it handles everything in between. The capabilities described above are just a few examples of what Batch can do.

Given that you talk about queues and their persistence, it sounds a lot like your problem would fit into a simple JMS model. If you want to make it easy on yourself, I'd recommend using spring-jms as a wrapper around the basic Java EE JMS API; the Spring wrappers are much simpler to use than raw JMS. For a messaging service I'd look at RabbitMQ, because again it's pretty simple. With the JMS architecture, you'd post user requests to a queue that you've configured to be persistent, and a custom listener on the queue would pass requests to a report generator whenever it runs. You can assign one or more threads to the listener, so it should be easy to tune the performance of the report generator.

There is a pretty useful DZone article about using RabbitMQ via spring-integration (a set of prebuilt pattern implementations that help with connecting things to each other). If you schedule work with Quartz, you can also use Spring's `SpringBeanJobFactory`, together with an `AutowireCapableBeanFactory`, to autowire Quartz job objects automatically.
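The JMS design described above (requests posted to a queue and drained by a listener with one or more threads) can be modeled in plain Java to show how the thread count works as a tuning knob. This is a sketch, not spring-jms or RabbitMQ code: an in-memory `LinkedBlockingQueue` stands in for the broker queue, so unlike the persistent queue the answer recommends, it loses requests on restart. The class name, `generateReport`, and the stop-signal convention are hypothetical.

```java
import java.util.List;
import java.util.Queue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ConcurrentLinkedQueue;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;

// In-memory stand-in for "persistent queue + listener with N threads".
// NOT persistent: a real setup would use a broker-backed, durable queue.
final class ReportQueueDemo {
    // Hypothetical stop signal; one is queued per listener thread after the real requests.
    private static final String STOP = "__STOP__";

    private ReportQueueDemo() {}

    /** Posts all requests, drains them with listenerThreads consumers, and returns the reports (in any order). */
    static List<String> run(List<String> requests, int listenerThreads) {
        BlockingQueue<String> queue = new LinkedBlockingQueue<>(); // unbounded, so add() never blocks
        Queue<String> reports = new ConcurrentLinkedQueue<>();
        ExecutorService listeners = Executors.newFixedThreadPool(listenerThreads);
        for (int i = 0; i < listenerThreads; i++) {
            listeners.execute(() -> listen(queue, reports));
        }
        requests.forEach(queue::add); // post user requests to the queue
        for (int i = 0; i < listenerThreads; i++) {
            queue.add(STOP);
        }
        listeners.shutdown();
        try {
            if (!listeners.awaitTermination(1, TimeUnit.MINUTES)) {
                throw new IllegalStateException("listeners did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for listeners", e);
        }
        return List.copyOf(reports);
    }

    // One listener thread: take requests until the stop signal arrives.
    private static void listen(BlockingQueue<String> queue, Queue<String> reports) {
        try {
            while (true) {
                String request = queue.take(); // blocks until something is queued
                if (STOP.equals(request)) {
                    return;
                }
                reports.add(generateReport(request));
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    // Hypothetical report generator.
    static String generateReport(String request) {
        return "report for " + request;
    }

    public static void main(String[] args) {
        run(List.of("q1-sales", "q2-sales", "inventory"), 2).forEach(System.out::println);
    }
}
```

Raising `listenerThreads` lets more reports be generated concurrently; with a real broker, the same idea is usually expressed through the listener container's concurrency settings.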

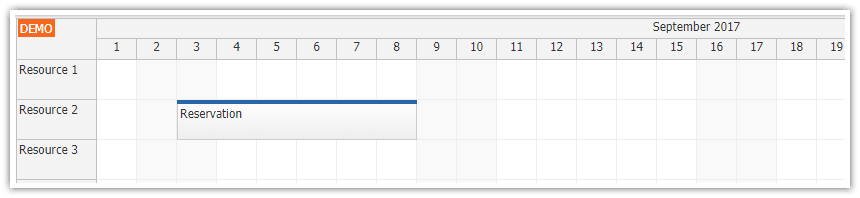

You would use a Quartz job if you wanted to check for new jobs every few seconds, minutes, or hours and process one or many of them at that interval. So Quartz is a scheduler, while Spring Batch handles the processing: you could use Quartz to schedule batch jobs to run at specific times. They aren't competing technologies; they are complementary.
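The Quartz-style approach (wake up on a fixed interval and process whatever has arrived) can be sketched with the JDK's `ScheduledExecutorService`. This is a stand-in for illustration, not the Quartz API; the `pending` queue, the per-run limit, and the processing step are hypothetical.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

// Interval polling in the spirit of a Quartz trigger, using only the JDK.
// This is NOT the Quartz API; ScheduledExecutorService stands in for the scheduler.
final class IntervalPoller {
    private IntervalPoller() {}

    /** Takes up to maxPerRun pending requests, records a result for each, and returns how many were handled. */
    static int processAvailable(Queue<String> pending, int maxPerRun, List<String> done) {
        int handled = 0;
        String request;
        // Check the limit before polling so no request is removed and then dropped.
        while (handled < maxPerRun && (request = pending.poll()) != null) {
            done.add("processed " + request); // hypothetical processing step
            handled++;
        }
        return handled;
    }

    /** Runs processAvailable immediately, then again periodSeconds after each run finishes. */
    static ScheduledExecutorService start(Queue<String> pending, int maxPerRun, List<String> done, long periodSeconds) {
        ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();
        scheduler.scheduleWithFixedDelay(
                () -> processAvailable(pending, maxPerRun, done), 0, periodSeconds, TimeUnit.SECONDS);
        return scheduler;
    }

    public static void main(String[] args) throws InterruptedException {
        Queue<String> pending = new ConcurrentLinkedQueue<>(List.of("r1", "r2", "r3"));
        List<String> done = Collections.synchronizedList(new ArrayList<>());
        ScheduledExecutorService scheduler = start(pending, 2, done, 1);
        Thread.sleep(2500); // let a few polling rounds run
        scheduler.shutdown();
        scheduler.awaitTermination(5, TimeUnit.SECONDS);
        System.out.println(done);
    }
}
```

Capping each run with `maxPerRun` corresponds to processing one or many requests per interval; anything left over waits for the next run.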

You would use Spring Batch if you wanted to process all report requests at the same time, perhaps at night when your servers are not otherwise occupied processing real-time user requests (or even during slow periods in the day).
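The Spring Batch case (process every pending request in one run, for example overnight) is commonly built as chunk-oriented processing: read items, transform them, and write them in fixed-size chunks. The plain-Java sketch below shows only that idea; it is not the Spring Batch API, and the chunk size and report format are hypothetical.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;

// Chunk-oriented "read, process, write" loop, loosely modeled on the idea behind
// Spring Batch steps. Plain Java only; this is NOT the Spring Batch API.
final class NightlyReportRun {
    private NightlyReportRun() {}

    /** Reads every item, transforms it, and passes results to the writer chunkSize at a time. Returns the number of chunks written. */
    static <I, O> int runAll(Iterator<I> reader, Function<I, O> processor, Consumer<List<O>> writer, int chunkSize) {
        if (chunkSize <= 0) {
            throw new IllegalArgumentException("chunkSize must be positive");
        }
        int chunks = 0;
        List<O> buffer = new ArrayList<>(chunkSize);
        while (reader.hasNext()) {
            buffer.add(processor.apply(reader.next()));
            if (buffer.size() == chunkSize) {
                writer.accept(new ArrayList<>(buffer)); // hand off a copy of the full chunk
                buffer.clear();
                chunks++;
            }
        }
        if (!buffer.isEmpty()) { // flush the final, partial chunk
            writer.accept(new ArrayList<>(buffer));
            chunks++;
        }
        return chunks;
    }

    public static void main(String[] args) {
        List<String> requests = List.of("sales-q1", "sales-q2", "inventory", "payroll", "returns");
        List<List<String>> written = new ArrayList<>();
        int chunks = runAll(requests.iterator(), r -> "report:" + r, written::add, 2);
        System.out.println(chunks + " chunks: " + written);
    }
}
```

Writing in chunks bounds memory use and, in a job that commits once per chunk, means a failure near the end does not force every earlier report to be regenerated.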
